This content is brought to you by Knoa Software, a leading provider of employee user-experience management and analytics for Oracle cloud, SAP and others.

With every such cycle, the team responsible for the upgrade needs to undertake several routine tasks:

- Reviewing upcoming changes and assessing the potential impact on users, or existing business processes

- Performing user acceptance testing (UAT) in a sandbox environment, in preparation for the production rollout

- Preparing and disseminating documentation and other self-help materials in case of significant UI or process changes

- Assessing the business impact of issues introduced by the upgrade, to adequately allocate IT resources towards their resolution

- Finally, engaging a fast response team to deal with the issues deemed to be critical

Without timely access to the right information to prioritize their efforts, the team tasked with managing this process can easily be overwhelmed.

One technique that helps with this challenge is deploying a user experience monitoring solution, which collects real-time analytics on user behavior and experience with the Oracle Cloud applications. This data brings instant visibility into what changed during an upgrade, how it affected real users, what issues matter the most, and how effective the response is.

In this article, we will illustrate how the team in charge of supporting Oracle Cloud upgrades, which typically consists of IT and business stakeholders, can leverage user analytics to make their lives easier, as well as deliver a better quality of service to the actual end users of the system.

Let’s review each of these tasks and see how using a tool like Knoa UEM for Oracle Cloud can help.

1. Assess the impact of upcoming changes

With every upgrade, Oracle customers can review detailed release notes for Oracle Cloud to determine which application functions, processes, or UI components are being changed, across the entire spectrum of Oracle Cloud applications. The key question for customers preparing for an upgrade is always this: how will these changes impact my users? In a similar vein, how should I decide which innovations to turn on right away, and which ones should stay disabled?

There is no straightforward way to answer this – unless you know how these functions, processes, or UI components are currently being utilized in your environment. This requires that you have a capability to track actual usage across all your users, and all your Oracle Cloud applications. Virtually any activity report in Knoa Analytics will give you this information.

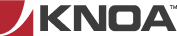

As an example, the “Impacted Users” report enables you to identify individual user segments, as well as individual users, with the highest level of utilization for a specific set of Oracle functions. Filter this report for the functions impacted by an upcoming upgrade, to identify the user population who will be impacted the most.
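To make the filtering idea concrete, here is a minimal sketch in Python. It is not how Knoa Analytics builds the report. The record layout, the user names, and the function names are all hypothetical, and it assumes you can export usage data as simple rows.

```python
from collections import defaultdict

# Hypothetical usage export: (user, segment, function, active_minutes).
usage = [
    ("alice", "Finance", "Create Invoice", 120),
    ("alice", "Finance", "Manage Journals", 30),
    ("bob", "Finance", "Create Invoice", 45),
    ("carol", "Procurement", "Create Requisition", 90),
    ("dave", "Procurement", "Create Invoice", 10),
]


def impacted_users(records, changed_functions):
    """Sum active minutes per user on the functions changed by the upgrade."""
    totals = defaultdict(int)
    for user, _segment, function, minutes in records:
        if function in changed_functions:
            totals[user] += minutes
    # Highest utilization first.
    return sorted(totals.items(), key=lambda item: item[1], reverse=True)


# Assume the release notes list "Create Invoice" as a changed function.
ranking = impacted_users(usage, {"Create Invoice"})
```

Grouping by the segment column instead of the user column would give the same view per user segment.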

2. Perform user acceptance testing for the new release

With unlimited time and resources, the ideal UAT cycle will attempt to test everything that changed with an upgrade. Obviously, that’s rarely the case – so how do you prioritize what to test?

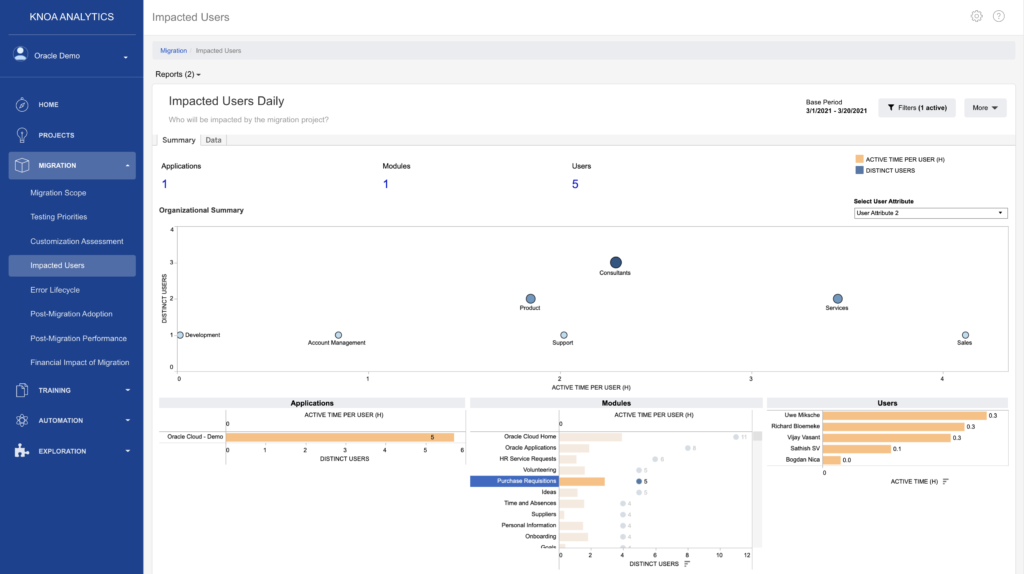

The same usage analytics give you that answer. Simply rank the Oracle functions affected by an upgrade according to their current level of utilization, to isolate the set that you need to focus on. When looking to rank those functions, you can consider key utilization metrics, such as number of users touching those functions on a routine basis and cumulative active time spent on those functions, as well as additional metrics, such as overall transaction performance and error rates.

The Knoa Analytics report “Testing Priorities” enables you to isolate specific Oracle screens that should be included in your UAT cycle, based on set criteria, thus limiting your testing scope and effort only to what is important. The UAT team will thank you later…

Having defined your scope, you can now begin your testing. Several new questions come into play:

2.1. Who should be co-opted in the UAT cycle?

The typical makeup of a UAT team consists of functional experts of the Oracle applications, also known as power users or super users. Which begs the question: who are these users?

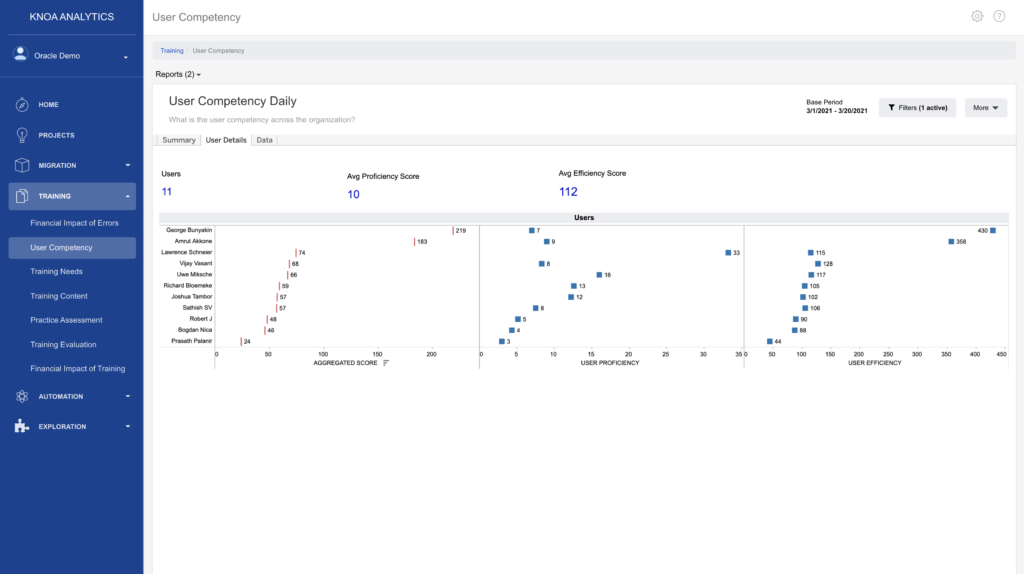

The “User Competency” report enables you to rank Oracle Cloud users by 2 levels of competency (user proficiency and user efficiency), which helps you identify your power users. You can go one step further and generate this report only for the functions that are included in the UAT scope – giving you the power users who you need to recruit for each UAT cycle.

2.2. How can you determine that the actual testing execution hit all the right targets?

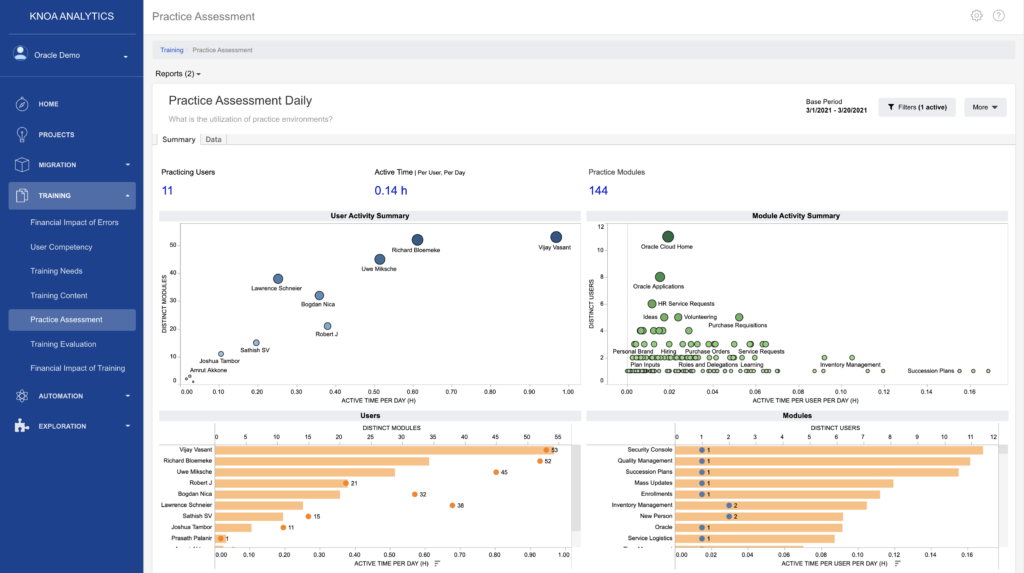

Trust, but verify. To determine if your UAT cycle has been on target, use the “Practice Assessment” report. Filter the report for just your UAT users, performing testing activities in the UAT environment, and you will get a clear picture of how the individual Oracle Cloud functions within your testing scope have been exercised as part of the UAT cycle. Use this information to address any lapse in coverage.
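At its core, a coverage check is a set difference between what was in scope and what was actually exercised. The sketch below assumes a hypothetical export of (tester, function) activity from the UAT environment; the names are made up for illustration.

```python
# Functions that were supposed to be tested in this UAT cycle.
uat_scope = {"Create Invoice", "Create Requisition", "Manage Journals"}

# Hypothetical (tester, function) activity captured in the UAT environment.
uat_activity = [
    ("alice", "Create Invoice"),
    ("bob", "Create Invoice"),
    ("carol", "Create Requisition"),
]


def coverage_gaps(scope, activity):
    """Return in-scope functions that no tester exercised."""
    exercised = {function for _tester, function in activity}
    return sorted(scope - exercised)


gaps = coverage_gaps(uat_scope, uat_activity)
```

Any function left in `gaps` is a lapse in coverage to address before sign-off.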

2.3. How can you easily generate a consolidated list of issues found during the UAT?

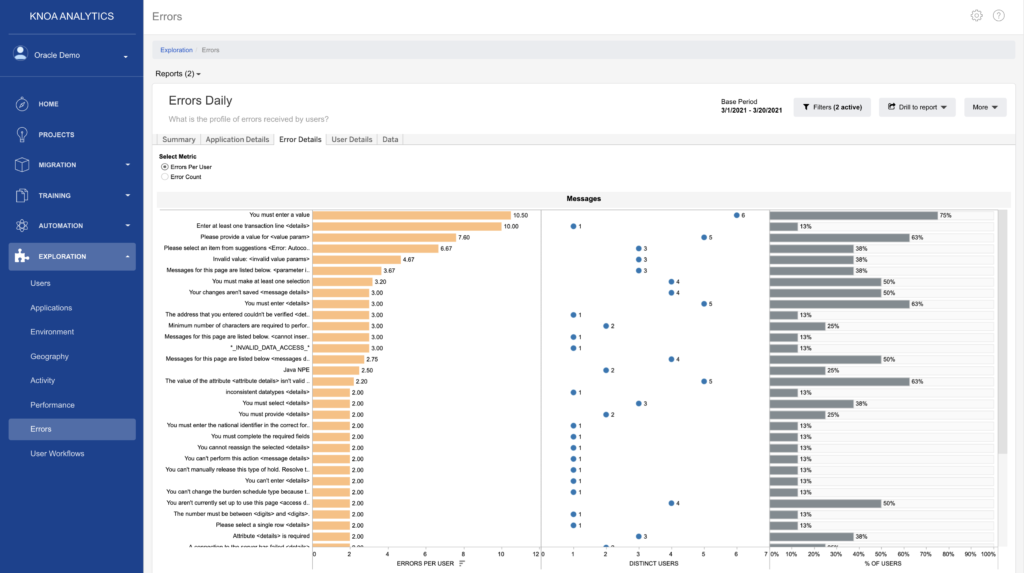

In addition to utilization metrics, Knoa Analytics also gives you access to a complete list of errors encountered while exercising the application. Use any of the error based reports, such as the “Errors” report below, similarly filtered for your UAT testers and UAT environment, to see aggregated views of all error conditions surfaced during the UAT. Rank these issues – by frequency, by tester, or by the Oracle Cloud functions in which they were encountered – to isolate the ones you need to focus on.
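As a rough sketch of that kind of ranking, the Python below counts the same error records three ways. The record layout and the error messages are hypothetical and stand in for whatever your error export contains.

```python
from collections import Counter

# Hypothetical UAT error records: (tester, function, message).
errors = [
    ("alice", "Create Invoice", "Supplier site is required"),
    ("bob", "Create Invoice", "Supplier site is required"),
    ("carol", "Create Requisition", "Page timed out"),
    ("alice", "Create Invoice", "Page timed out"),
    ("bob", "Create Invoice", "Supplier site is required"),
]

# The same records, aggregated along each dimension.
by_message = Counter(message for _tester, _function, message in errors)
by_function = Counter(function for _tester, function, _message in errors)
by_tester = Counter(tester for tester, _function, _message in errors)

# The single most frequent error condition.
top_issue = by_message.most_common(1)
```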

Of course, you can still go through your UAT cycle without this information, but… why would you?

3. Preparing and disseminating information about upcoming changes

Some people may like change. How about those who don’t? Taking advantage of Oracle’s quarterly innovation cycle requires you to embrace change and ensure that your Oracle users can embrace it as well.

All the analytics leveraged up to this point (usage analytics, error analytics) feed directly into this next effort: preparing the larger user population for the upcoming changes. You already know who will be impacted the most, and now you also know which particular changes they need to be made aware of. You can rely on this information to identify self-service artifacts (work instructions, FAQs or other “tips and tricks”) that should be disseminated among your team, to enable them to successfully adopt new features or adjust to changes made to existing ones.

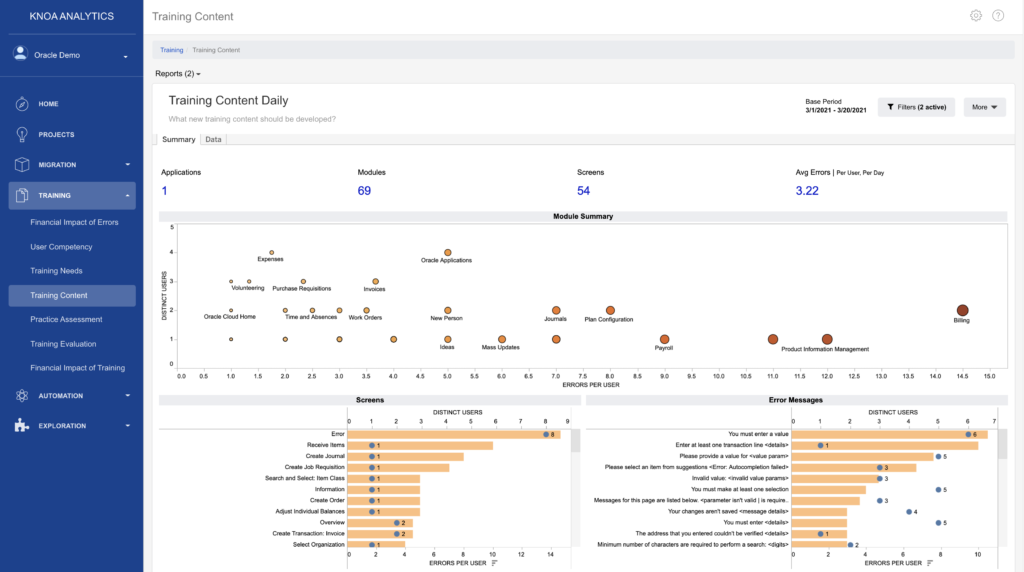

As an example, the “Training Content” report helps you identify Oracle Cloud functions where you need to prioritize self-help materials, based on the volume of user generated errors, which are errors that users themselves generate in the application, as opposed to errors caused by configuration or technical issues in the environment.

4. Assessing the business impact of issues introduced by the upgrade

Perhaps the most important question with any upgrade is this: How has the upgrade impacted my users, their experience, and their productivity? Oftentimes, the question is never asked. And even when asked, a clear answer is hard to come by.

With a user analytics solution in place, you have empirical data to enable you to measure changes in user adoption, user proficiency, and user experience – before and after the upgrade. There are no more guessing games. And most importantly, no more subjectivity. If your job is to ensure a smooth Oracle Cloud upgrade, you now have a reliable, objective way to measure its impact, every time it happens.

Let’s dissect this a bit further.

4.1. How has the upgrade impacted user adoption?

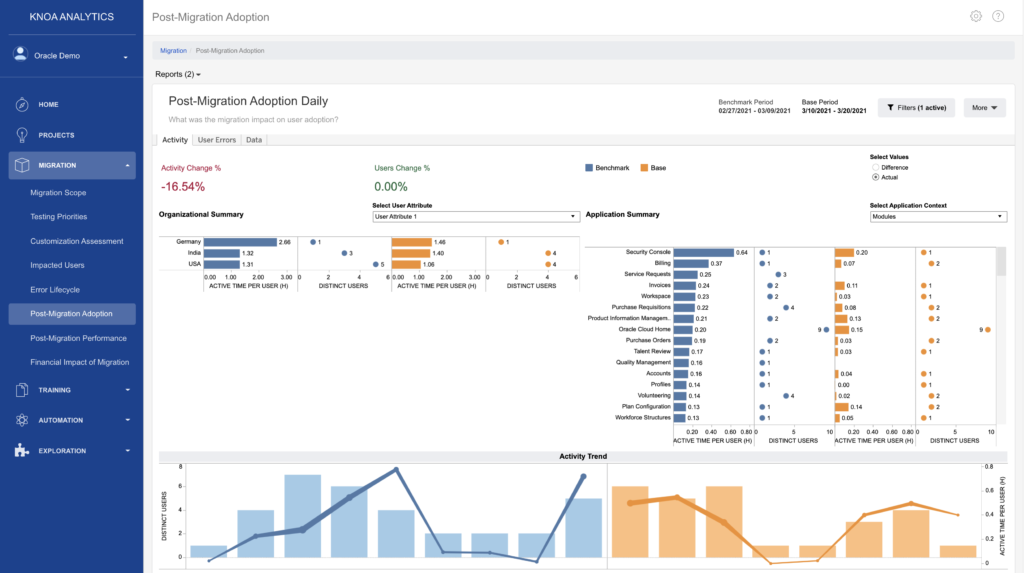

A sudden drop in user adoption, or sudden increase in errors, post-upgrade, is a bad omen. The “Post-Migration Adoption” report helps you assess the impact of the upgrade on user adoption, such as user activity levels and user error rates. Here you can isolate any negative impact down to individual users or Oracle Cloud functions, so you can be very focused in your remediation.
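A before-and-after comparison of this kind can be sketched as follows. The weekly active user counts and the 20% threshold are assumptions chosen for illustration, not values taken from the "Post-Migration Adoption" report.

```python
# Hypothetical weekly active users per function, before and after the upgrade.
before = {"Create Invoice": 140, "Create Requisition": 90, "Manage Journals": 35}
after = {"Create Invoice": 138, "Create Requisition": 54, "Manage Journals": 36}


def percent_change(baseline, current):
    """Relative change from the baseline, in percent."""
    return (current - baseline) / baseline * 100.0


def adoption_drops(pre, post, threshold_pct=-20.0):
    """Flag functions whose usage fell by at least the given percentage."""
    flagged = {}
    for function, baseline in pre.items():
        if baseline == 0:
            continue  # no baseline to compare against
        change = percent_change(baseline, post.get(function, 0))
        if change <= threshold_pct:
            flagged[function] = round(change, 1)
    return flagged


drops = adoption_drops(before, after)
```

The same comparison works for error rates; you would flag increases above a threshold instead of drops below one.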

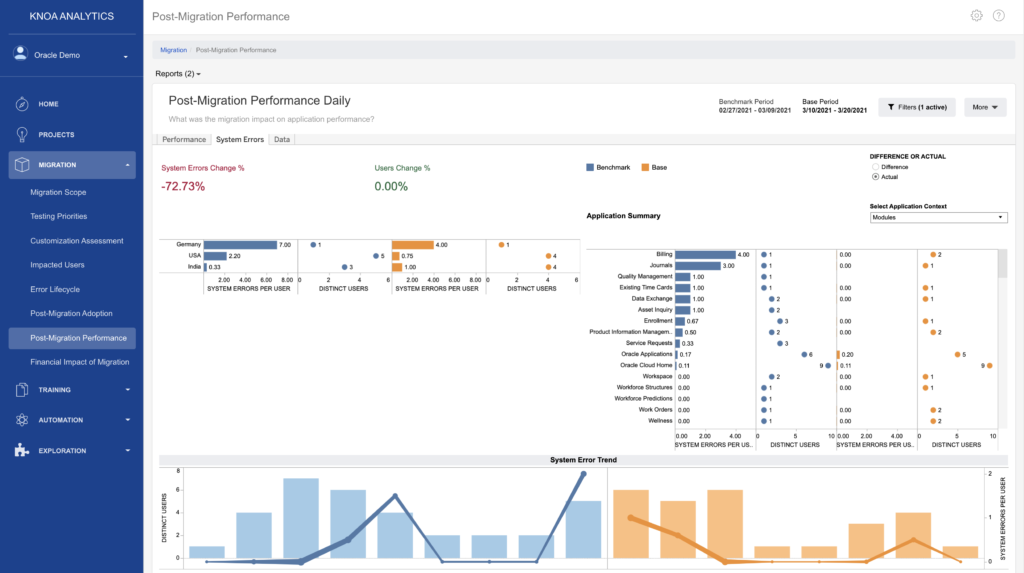

4.2. How has the upgrade impacted user experience?

Similarly, a sudden degradation in performance, post-upgrade, should raise an immediate red flag. The “Post-Migration Performance” report helps you assess the impact of the upgrade on user experience, by looking at the overall response time for user initiated actions, as well as system errors, which are caused by configuration or technical issues in the environment. To help with triaging of these issues, the report lets you identify individual users and functions where experience has degraded after the upgrade.

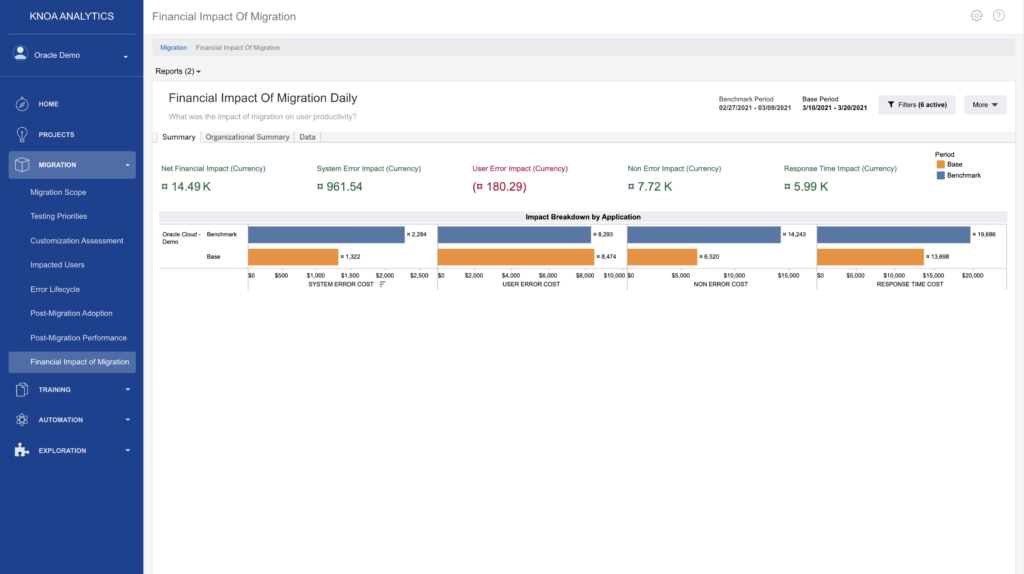

4.3. Finally, what was the overall impact on user productivity?

What was the net impact of the upgrade on user productivity, measured by productive time spent in the application? The “Financial Impact of Migration” report gives you the business impact of post-upgrade issues, in terms of their true financial impact on user productivity. Since every application slowdown, or every error encountered, translates into a certain “time loss” for the user, it is straightforward to generate an aggregate view of lost productivity, and its financial cost.
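The arithmetic behind such an estimate can be sketched in Python. Every number here is an assumption: the hourly cost, the time lost per error, and the per-function observations are hypothetical, and the actual report may compute time loss differently.

```python
HOURLY_COST = 60.0           # assumed labor cost per user hour
ERROR_RECOVERY_SECONDS = 90  # assumed time a user loses per error

# Hypothetical post-upgrade observations per function:
# (actions, baseline response in s, post-upgrade response in s, new errors)
observations = {
    "Create Invoice": (12000, 1.5, 2.0, 40),
    "Create Requisition": (3000, 2.0, 2.0, 200),
}


def lost_hours(actions, baseline_s, current_s, errors):
    """Time lost to slower responses plus time lost recovering from errors."""
    slowdown_s = max(current_s - baseline_s, 0.0) * actions
    return (slowdown_s + errors * ERROR_RECOVERY_SECONDS) / 3600


total_hours = sum(lost_hours(*obs) for obs in observations.values())
total_cost = total_hours * HOURLY_COST
```

With these sample figures, the slowdown on invoices and the new errors on requisitions add up to a little under eight lost hours for the period.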

5. Fast response to identified issues

No matter how robust your change management process is, you may still run into production issues that find their way to your Service Desk or Help Desk. What information is available to your support team to deal with these issues quickly and efficiently?

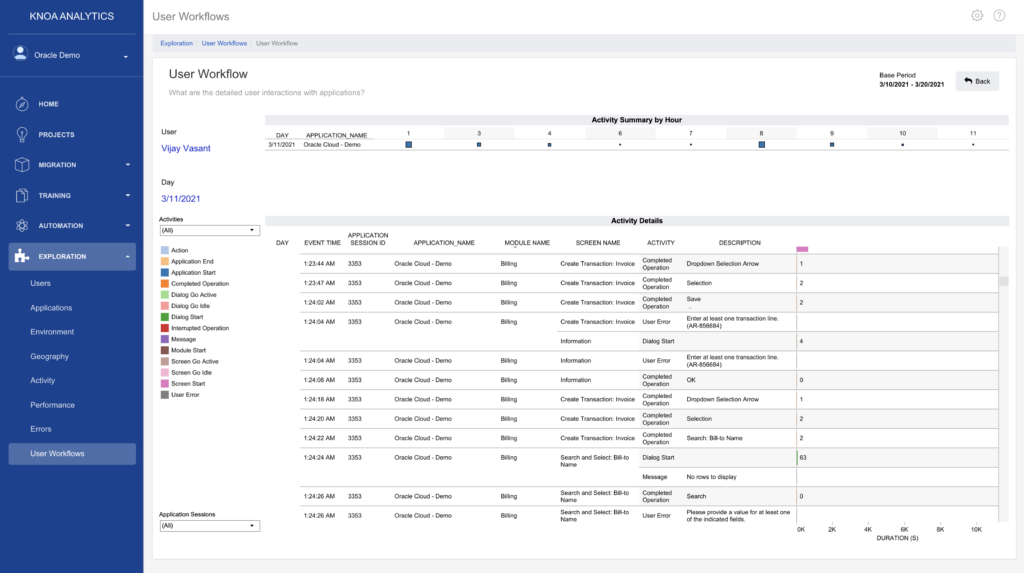

One technique that greatly helps the support team is having access to real-time information about the user journey through the Oracle applications. This information is vital across the entire support cycle:

- Triage – validate real from perceived issues: is the system indeed performing poorly, preventing users from completing their tasks, or is the user simply confused by or unaware of certain functionality?

- Prioritize – is the same issue encountered by other users, suggesting a systemic problem?

- Replicate – what are the actions users took before, during and after an issue was encountered, so you can easily replicate issues?

- Resolve – could you identify the root cause of an issue faster if you knew exactly where, when, and how it happened?

- Validate – how do you know that your remediation plan solved the issue?

The “User Workflow” report provides this type of information in real time: every important user action, at any time, on every Oracle Cloud screen, in any environment. Does your support team currently spend hundreds of hours chasing down elusive issues that are hard to reproduce and even harder to resolve? This report brings the X-ray into the diagnostic room.
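Two of the support questions above, "is anyone else hitting this?" and "what did the user do just before?", can be answered from a plain event log. The Python sketch below assumes a hypothetical `Event` record and sample data; it does not reflect the actual format of the "User Workflow" report.

```python
from typing import NamedTuple, Optional


class Event(NamedTuple):
    ts: int                      # ordering key, e.g. seconds since session start
    user: str
    screen: str
    action: str
    error: Optional[str] = None  # set only when the action raised an error


# Hypothetical workflow events.
events = [
    Event(1, "alice", "Invoices", "open"),
    Event(2, "alice", "Invoices", "enter supplier"),
    Event(3, "bob", "Invoices", "open"),
    Event(4, "alice", "Invoices", "save", "Page timed out"),
    Event(5, "bob", "Invoices", "save", "Page timed out"),
    Event(6, "carol", "Requisitions", "open"),
]


def users_affected(log, error):
    """Prioritize: how many distinct users hit the same error?"""
    return {e.user for e in log if e.error == error}


def steps_before_error(log, user, error, n=3):
    """Replicate: the user's last n actions up to and including the error."""
    trail = sorted((e for e in log if e.user == user), key=lambda e: e.ts)
    for i, event in enumerate(trail):
        if event.error == error:
            return [f"{s.screen}: {s.action}" for s in trail[max(i - n, 0):i + 1]]
    return []


affected = users_affected(events, "Page timed out")
trail = steps_before_error(events, "alice", "Page timed out")
```

Running the same queries after a fix is deployed gives a simple way to validate the remediation: the affected set for that error should stop growing.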

Final Thoughts

Managing an Oracle Cloud upgrade, or any other upgrade for that matter, doesn’t need to be a painful experience, fraught with frustration. By focusing on the end user experience, you can change your odds of success. Here is a thought experiment: next time you’re wrestling with a problem induced by an upgrade, ask yourself if having access to user behavior data could help.